Digital twin models promise advances in computing

Systems controlled by next-generation computing algorithms could give rise to better and more efficient machine learning products, a new study suggests.

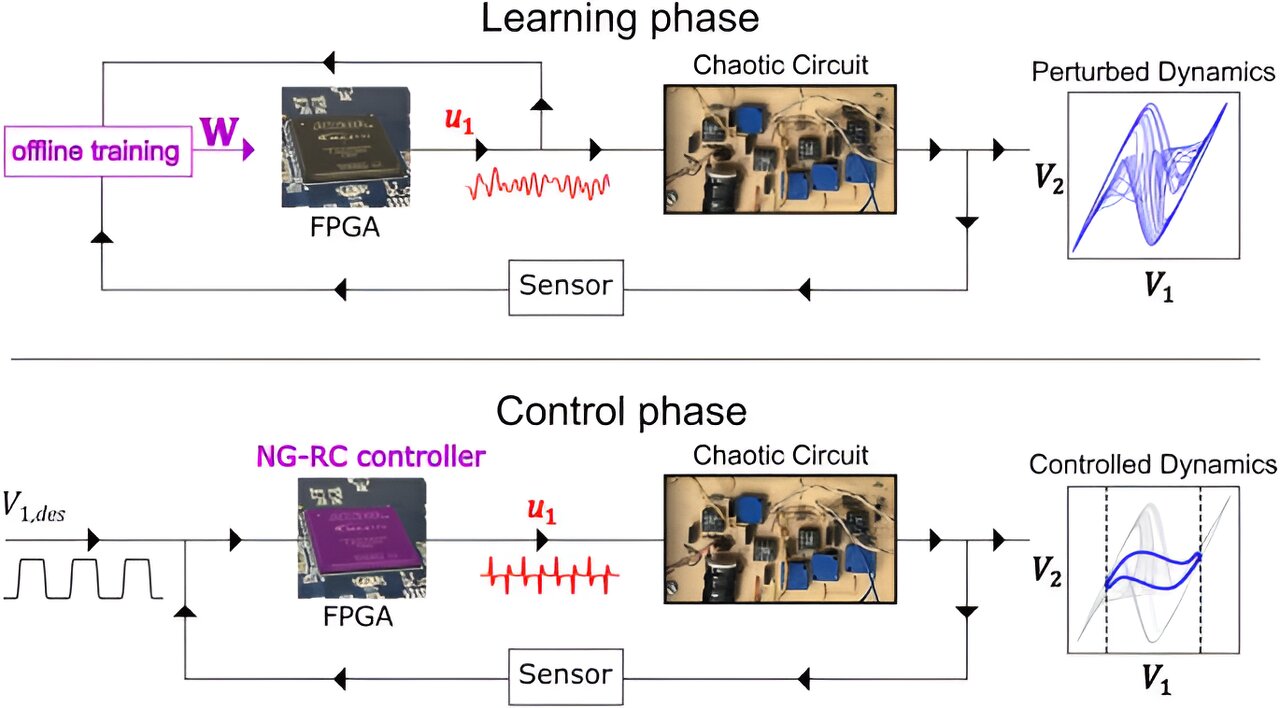

Using machine learning tools to create a digital twin (a virtual copy) of an electronic circuit that exhibits chaotic behavior, researchers were able to predict how the circuit would behave and use that information to control it.

Many everyday devices, like thermostats and cruise control, use linear controllers, which apply simple rules to direct a system to a desired value. Thermostats, for example, employ such rules to determine how much to heat or cool a space based on the difference between the current and desired temperatures.
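The thermostat rule can be illustrated with a minimal sketch of a proportional controller, the simplest kind of linear controller. The function names, the gain of 0.5 and the temperatures are illustrative assumptions, not values from the study.

```python
def proportional_step(current_temp, target_temp, gain=0.5):
    """Return a heating (+) or cooling (-) command proportional to the error."""
    error = target_temp - current_temp
    return gain * error


def simulate(start_temp, target_temp, steps=20, gain=0.5):
    """Apply the controller repeatedly to a toy room whose temperature
    changes by exactly the commanded amount each step (an assumption)."""
    temp = start_temp
    history = [temp]
    for _ in range(steps):
        temp += proportional_step(temp, target_temp, gain)
        history.append(temp)
    return history


history = simulate(start_temp=15.0, target_temp=21.0)
print(f"final temperature: {history[-1]:.4f}")
```

In this toy room the gap to the target halves at every step, which is why such a simple rule is enough for well-behaved systems.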

Yet because of how straightforward these algorithms are, they struggle to control systems that display complex behavior, like chaos.
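A defining feature of chaos is that tiny differences in the starting state grow rapidly over time. The sketch below shows this with the logistic map, a standard textbook example of a chaotic system; it stands in for the study's electronic circuit only as an assumption, and the starting value and step count are arbitrary.

```python
def logistic_map(x, r=4.0):
    """One step of the logistic map, which is chaotic at r = 4."""
    return r * x * (1.0 - x)


def divergence(x0, delta=1e-9, steps=60):
    """Track the gap between two trajectories that start delta apart."""
    a, b = x0, x0 + delta
    gaps = [abs(a - b)]
    for _ in range(steps):
        a, b = logistic_map(a), logistic_map(b)
        gaps.append(abs(a - b))
    return gaps


gaps = divergence(0.2)
print(f"initial gap: {gaps[0]:.1e}")
print(f"largest gap from step 30 onward: {max(gaps[30:]):.3f}")
```

A controller built on a fixed simple rule has little room to cope with this kind of growth, because a small error in its assumptions is amplified along with everything else.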

As a result, advanced devices like self-driving cars and aircraft often rely on machine learning-based controllers, which use intricate networks to learn the optimal control algorithm needed to operate efficiently. However, these algorithms have significant drawbacks, the most serious of which is that they can be extremely challenging and computationally expensive to implement.

Now, having access to an efficient digital twin is likely to have a sweeping impact on how scientists develop future autonomous technologies, said Robert Kent, lead author of the study and a graduate student in physics at The Ohio State University.

The work is published in the journal Nature Communications.

“The problem with most machine learning-based controllers is that they use a lot of energy or power and they take a long time to evaluate,” said Kent. “Developing traditional controllers for them has also been difficult because chaotic systems are extremely sensitive to small changes.”

These issues, he said, are critical in situations where milliseconds can make a difference between life and death, such as when self-driving vehicles must decide to brake to prevent an accident.

The team’s digital twin is compact enough to fit on an inexpensive computer chip that could balance on a fingertip, and it can run without an internet connection. It was built to optimize a controller’s efficiency and performance, which the researchers found reduced power consumption. It manages this mainly because it was trained using a machine learning approach called reservoir computing.

“The great thing about the machine learning architecture we used is that it’s very good at learning the behavior of systems that evolve in time,” Kent said. “It’s inspired by how connections spark in the human brain.”
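The appeal of reservoir computing is that only a simple linear readout is trained, which keeps training cheap. The sketch below is a minimal illustration in that spirit, not the study's implementation: it assumes a variant that uses polynomial features of the current state in place of a random network, fits the readout by ridge regression, and uses the logistic map as a stand-in for a real time-evolving system. All function names and parameter values are hypothetical.

```python
def logistic_map(x, r=3.9):
    """Stand-in chaotic system (an assumption, not the study's circuit)."""
    return r * x * (1.0 - x)


def features(x):
    """Constant, linear and quadratic terms of the current state."""
    return [1.0, x, x * x]


def solve(a, b):
    """Solve a small linear system by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda i: abs(m[i][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for row in range(col + 1, n):
            factor = m[row][col] / m[col][col]
            for k in range(col, n + 1):
                m[row][k] -= factor * m[col][k]
    w = [0.0] * n
    for row in range(n - 1, -1, -1):
        s = sum(m[row][k] * w[k] for k in range(row + 1, n))
        w[row] = (m[row][n] - s) / m[row][row]
    return w


def train_readout(series, ridge=1e-8):
    """Ridge regression: find weights so that w . features(x_t) ~ x_{t+1}."""
    n = len(features(series[0]))
    gram = [[0.0] * n for _ in range(n)]
    rhs = [0.0] * n
    for x_now, x_next in zip(series[:-1], series[1:]):
        phi = features(x_now)
        for i in range(n):
            rhs[i] += phi[i] * x_next
            for j in range(n):
                gram[i][j] += phi[i] * phi[j]
    for i in range(n):
        gram[i][i] += ridge  # small penalty keeps the system well conditioned
    return solve(gram, rhs)


def predict(weights, x):
    """One-step-ahead prediction from the trained readout."""
    return sum(w * p for w, p in zip(weights, features(x)))


# Record 200 steps of the system, then train on them in a single pass.
series = [0.3]
for _ in range(200):
    series.append(logistic_map(series[-1]))

weights = train_readout(series)
one_step_error = abs(predict(weights, 0.42) - logistic_map(0.42))
print("readout weights:", weights)
print("one-step prediction error:", one_step_error)
```

Training here amounts to solving one three-by-three linear system, which hints at why a model of this kind can fit on small hardware; a real circuit would need more features and noisy measured data.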

Although similarly sized computer chips have been used in devices like smart fridges, according to the study, this novel computing ability makes the new model especially well equipped to handle dynamic systems such as self-driving vehicles as well as heart monitors, which must be able to quickly adapt to a patient’s heartbeat.

“Big machine learning models have to consume lots of power to crunch data and come out with the right parameters, whereas our model and training is so extremely simple that you could have systems learning on the fly,” he said.

To test this theory, researchers directed their model to complete complex control tasks and compared its results to those from previous control techniques. The study found that their approach was more accurate at the tasks than its linear counterpart and significantly less computationally complex than a previous machine learning-based controller.

“The increase in accuracy was pretty significant in some cases,” said Kent. Though the outcome showed that their algorithm does require more energy than a linear controller to operate, this tradeoff means that when it is powered up, the team’s model lasts longer and is considerably more efficient than current machine learning-based controllers on the market.

“People will find good use out of it just based on how efficient it is,” Kent said. “You can implement it on pretty much any platform and it’s very simple to understand.” The algorithm was recently made available to scientists.

Outside of inspiring potential advances in engineering, there’s also an equally important economic and environmental incentive for creating more power-friendly algorithms, said Kent.

As society becomes more dependent on computers and AI for nearly all aspects of daily life, demand for data centers is soaring, leading many experts to worry over digital systems’ enormous power appetite and what future industries will need to do to keep up with it.

And because building these data centers and running large-scale computing experiments can generate a large carbon footprint, scientists are looking for ways to curb carbon emissions from this technology.

To advance their results, future work will likely be steered toward training the model to explore other applications like quantum information processing, Kent said. In the meantime, he expects that these new elements will reach far into the scientific community.

“Not enough people know about these types of algorithms in the industry and engineering, and one of the big goals of this project is to get more people to learn about them,” said Kent. “This work is a great first step toward reaching that potential.”

Other Ohio State co-authors include Wendson A.S. Barbosa and Daniel J. Gauthier.

More information: Robert M. Kent et al., Controlling chaos using edge computing hardware, Nature Communications (2024). DOI: 10.1038/s41467-024-48133-3

The Ohio State University

Citation: Controlling chaos using edge computing hardware: Digital twin models promise advances in computing (2024, May 9), retrieved 11 May 2024
